OpenAI has announced that it’s replacing the default model in ChatGPT with GPT-5.5 Instant, which it says produces faster responses, shorter answers, and fewer incorrect claims.

The model follows GPT-5.3 Instant, introduced in March, and is designed for everyday tasks such as writing, analysis, and general queries. OpenAI states in a blog post that “accuracy” is the model’s core selling point.

“Instant is now more dependable, with significant improvements in factuality across the board and the largest gains in domains where accuracy matters most,” the company writes.

A focus on everyday use

To rewind a little, OpenAI launched its flagship GPT-5.5 model in April, dubbing it “a new class of intelligence” designed to tackle more complex reasoning tasks. GPT-5.5 Instant sits alongside it as a lighter variant, handling routine queries while more demanding work can be passed to higher-tier models.

That positioning builds on changes OpenAI introduced with GPT-5.3 Instant in March. At the time, the company said the model would cut down on what it described as “moralizing” responses and reduce unnecessary refusals, aiming for more direct answers to user queries.

GPT-5.5 Instant carries that general ethos forward. The emphasis is less on bells-and-whistles features than on how ChatGPT behaves in day-to-day use: it wants to be liked.

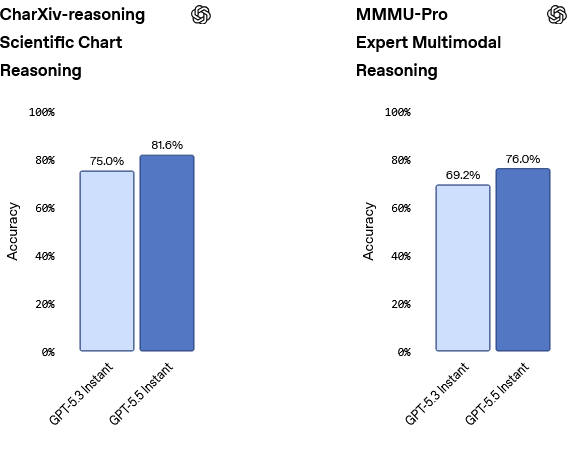

These improvements are reflected in gains on evaluations across visual reasoning, math, and science. On the CharXiv scientific chart reasoning benchmark, for example, GPT-5.5 Instant scores 81.6%, up from 75.0% for GPT-5.3 Instant, a gain of 6.6 percentage points. A similar lift appears on MMMU-Pro, a multimodal reasoning benchmark developed by academic researchers, where it reaches 76.0% compared with 69.2% for its predecessor, a gain of 6.8 points.

It’s worth noting that these results are based on OpenAI’s own reporting, and the company hasn’t provided broader comparisons with competing models.

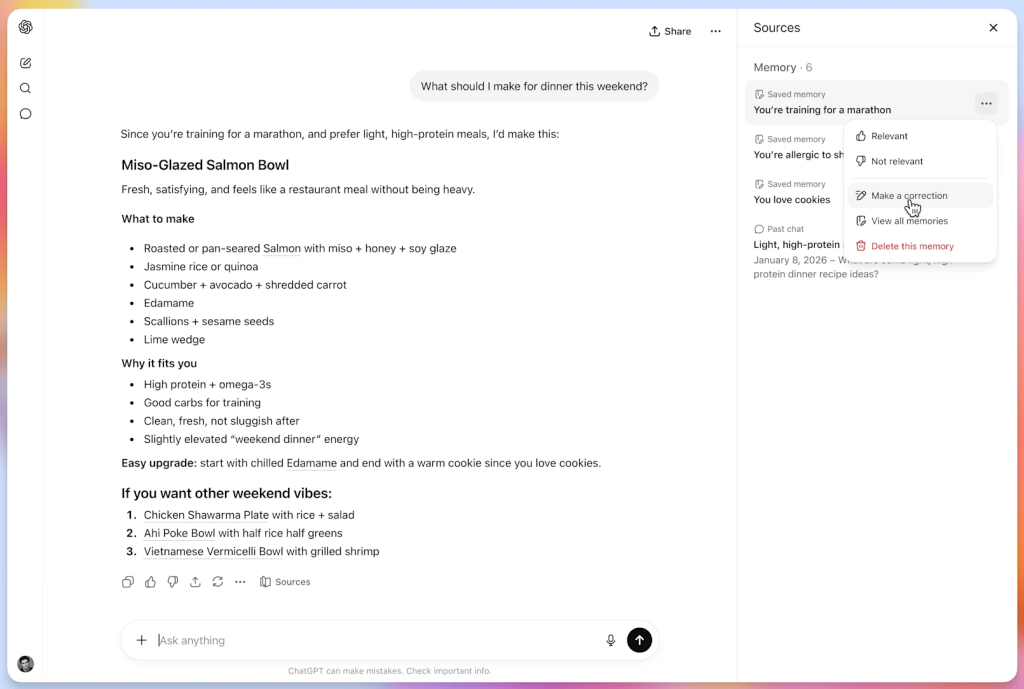

Alongside the model launch, OpenAI is placing more emphasis on how ChatGPT uses and explains personal data.

As before, the model can draw on personal context, including past conversations, uploaded files, and connected services. However, OpenAI says it’s now introducing “memory sources” across all ChatGPT models, a feature that shows which inputs were used in a response and allows users to remove them.

“When a response is personalized, you can see what context was used, such as saved memories or past chats, and delete or correct it if something is outdated or no longer relevant,” OpenAI writes.

OpenAI said the feature is intended to make personalization easier to understand, though it may not capture every input behind a response. In some cases, it will surface only the most relevant past chats or memories rather than everything the system referenced, with the company saying it plans to expand that visibility over time.

Competing models and availability

OpenAI isn’t alone in pushing faster, lower-cost models for day-to-day use. Google has Gemini Flash for lower-latency tasks, while Anthropic offers Claude Haiku for faster, cheaper responses.

GPT-5.5 Instant sits in the same category, designed to handle most user requests, while more complex tasks are handled by heavier models when needed.

Pricing isn’t so much of a factor with GPT-5.5 Instant, given that it’s positioned as the default model inside ChatGPT, where usage is bundled into subscription tiers rather than exposed at the model level. More powerful models remain available to developers through the API, where cost and performance trade-offs matter more in production.

GPT-5.5 Instant is rolling out now as the default model in ChatGPT.
