For this 50-year-old company, AI is “just a tool.”

At SAS Innovate 2026 in Grapevine, Texas, the 50-year-old, privately held analytics company showcased agentic workflows, digital twins built with Unreal Engine, and its quantum computing efforts. But the pitch to customers is decidedly not about AI itself. It’s about making AI useful for them.

“Every breakthrough technology follows the same arc,” SAS CTO Bryan Harris said in his keynote. “It solves a problem, it reshapes society, and eventually it fades into the background of everyday life.

“Yesterday it was the internet, and today it’s AI. And tomorrow, I can assure you it will be something else. […] The only thing that outlasts every innovation is people.”

This theme ran throughout the conversations and announcements at the event. AI is, without a doubt, central to SAS’s roadmap, but for the company it’s just one feature among many, sitting alongside its existing optimization, machine learning, computer vision, and forecasting tools.

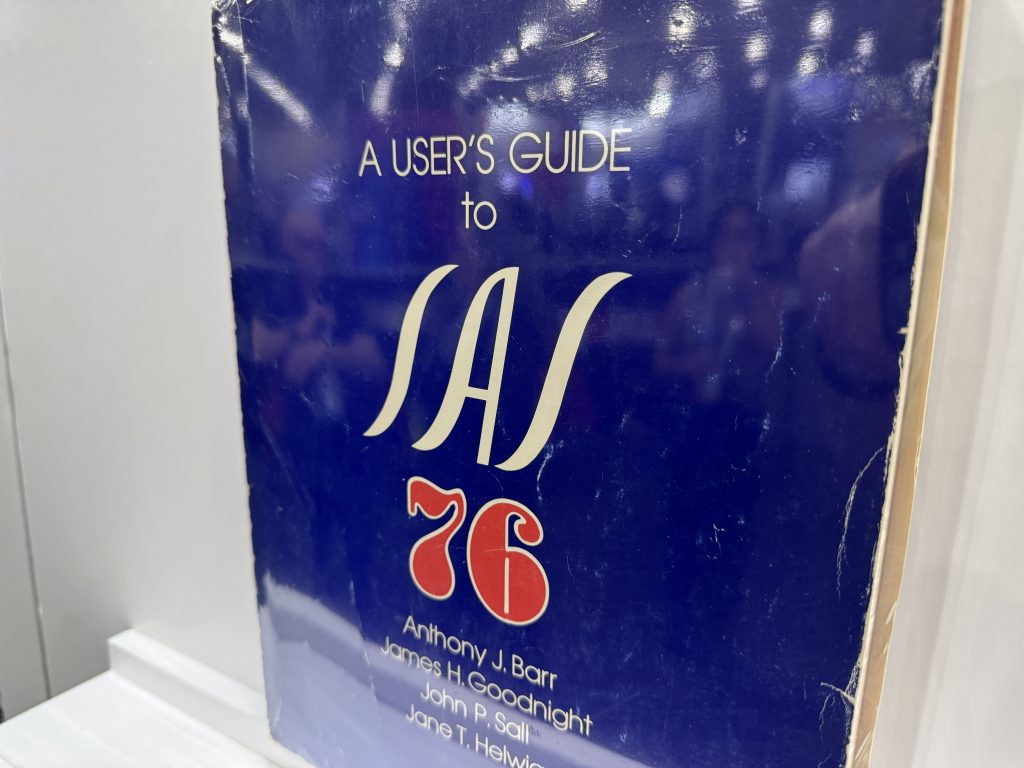

Udo Sglavo, SAS’s VP of applied AI and modeling, traces this philosophy back to the company’s origins. SAS, after all, was started in the 1970s by a small team analyzing agricultural data at North Carolina State University.

“SAS has made really good progress for 50 years by focusing on domain questions,” Sglavo tells The New Stack. “It was not about creating the technology. It was really about, ‘Can we solve a specific business question, industry question?’”

When large language models arrived, SAS considered building its own, as some competitors did, but decided to stick with what Sglavo calls an “agnostic technology” approach.

“AI will change again. We will see different waves of different AI techniques coming in,” Sglavo says. “And we will always say, ‘Look, it doesn’t matter to us.’ To us, it’s just a tool that we are using to solve the problem.”

In his keynote, Harris walked through SAS’s first 50 years and noted that neutrality has always been at the core of the company’s offering: the SAS programming language that turned data into decisions; the “multi-vendor architecture” of the 1980s that let SAS run on mainframes, Unix, and PCs; and, once cloud computing arrived, a multi-cloud architecture that brought the same approach to AWS, GCP, and on-prem environments.

The current chapter is what Sglavo calls a “multi-large language model type of environment.”

“When we go to a German insurance company, and they are a Microsoft shop, we can’t come in and say, ‘We want you to use this large language model,’” Sglavo says. “They’ve made this decision already.”

What agents need

The gap between agentic AI hype and production deployment is still wide in the enterprise. Brett Wujek, SAS’s head of product strategy for next-generation AI, believes the biggest misconception he encounters is “that the agents actually know anything.”

An agent’s LLM handles natural language processing really well, he says, but “the tools and resources are really what drive the robustness and reliability and the accuracy of the actions that the agent actually carries out.”

Harris framed this on the main stage as a question of trust. Deterministic systems, with their fixed rules and predictable outputs, are the foundation of enterprise decision-making. LLM-based agents, by contrast, are non-deterministic: “three different runs can produce three different outputs,” he told the audience. Enterprises need both, but the non-deterministic parts need guardrails, specifically verification and validation steps, to work at scale.

SAS’s response is to build Model Context Protocol (MCP) servers on top of its Viya cloud platform, exposing its analytics, governance, and decisioning capabilities as tools that agents can call, even when those agents are orchestrated entirely by other vendors.

“I never hear from customers, ‘Well, we don’t trust the results coming from SAS,’” Wujek says. The idea is for the MCP server to let SAS extend that trust to whatever agentic framework a customer uses, whether or not SAS manages the agent.

“We’re now at that tipping point of coming back around on, all right, how can we actually use this technology in a really trusted and structured way?” Wujek tells The New Stack.

Part of that trust comes from what’s under the hood. Luis Flynn, who leads product marketing for SAS’s packaged AI solutions and agent-based analytics, tells The New Stack that the company’s analytics aren’t thin wrappers around open source libraries. “What we still do best is compiled C code,” Flynn says. “We’re not spitting out just stitched-together Python code.”

Python users can invoke SAS capabilities in a Pythonic way, he says, but the underpinnings are highly performant algorithms, not scripts. When an MCP server calls a SAS forecasting model, that’s what it’s calling.

Boring = money

SAS’s Fortune 500 customers rely on its services to make billion-dollar decisions, so the company has to give them dependable data. But those customers are also looking at the new AI tools on the market to push their data capabilities to the next level. SAS is where it is today because it has made pragmatic decisions about new technologies in the past, and being pragmatic sometimes means a flashy demo is necessary, too.

“When technology is early in its life cycle, you pick the use cases which are impressive,” Sglavo says. “And eventually you go back to the use cases which are extremely boring, because that’s where the money is.”

In his keynote, Harris showed photorealistic digital twins, built with Epic Games using Unreal Engine, that simulate manufacturing floors and sterilization facilities. He also presented a repeatable four-phase framework for AI-assisted software development within SAS itself, in which, for example, a developer’s value shifts from writing code to context engineering.

SAS is also looking further ahead and investing in quantum computing. A small team inside the company is building a toolbox that abstracts away hardware differences across quantum vendors. But even there, the company focuses on its core strengths.

Bill Wisotsky, SAS’s principal quantum systems architect, describes an insurance company that came to SAS with an optimization problem it believed required quantum computing. Before the team explored a quantum approach, SAS’s classical experts reformulated the problem and solved it in 90 seconds.

“SAS is not a quantum computing company,” Wisotsky says. “As a company, we want to provide value.”

Governance as competitive advantage

Kristi Boyd, SAS’s senior trustworthy AI specialist, tells The New Stack that the governance challenge is inseparable from the use case. “With generative AI, the technology is what it is, but how you apply it is where you’re actually introducing the risks,” Boyd says.

She points to two SAS customers with opposite approaches to that risk. PZU, a roughly 200-year-old insurer in Poland, wanted to be seen as an innovation leader and built a governance framework that let it lean into risk and run more pilots. An unnamed U.K. financial institution, on the other hand, prioritized reliability over novelty, deliberately positioning itself as a third- or fourth-wave technology adopter. Both needed governance infrastructure, but for fundamentally different reasons.

Navigator, SAS’s newest governance product, will become generally available in summer 2026. SAS designed it to be vendor-agnostic and standalone, capable of governing SAS models as well as models built in Snowflake or procured from third parties.

Sglavo argues governance will become a differentiator in its own right. “I actually believe this will be a competitive advantage eventually,” he says. “You can say, if you’re using our software, we can guarantee that we are not training on dodgy data.”

The patience question

Harris opened his keynote by naming the anxiety behind all of this. “We are in a crisis, a crisis of confidence of human ingenuity,” he said. “It’s not a collapse in the belief that AI will matter. It’s a collapse in the belief that people will matter.”

Sglavo sees that anxiety playing out in SAS’s customer base. “Most of the time it’s the C-level which says we got to do AI, […] so people get nervous,” he says. The result: lots of prototyping, not much production deployment.

Flynn worries about what happens when that pattern takes hold. “If organizations use it as the easy button, they atrophy,” he says. “They never build the fundamentals, and they never think pragmatically.”

SAS’s private ownership lets it resist that pressure. “We can move a little bit slower and take more time with these kinds of decisions,” Sglavo says. The company watched competitors rush into building their own LLMs and chose not to.

Harris told the audience that “there’s no question that this is a pivotal moment, but it’s just a moment.” SAS is clearly betting that its focus on the fundamentals will help it win. That has worked for 50 years, after all.

The post “To us, it’s just a tool”: How SAS is selling AI to the Fortune 500